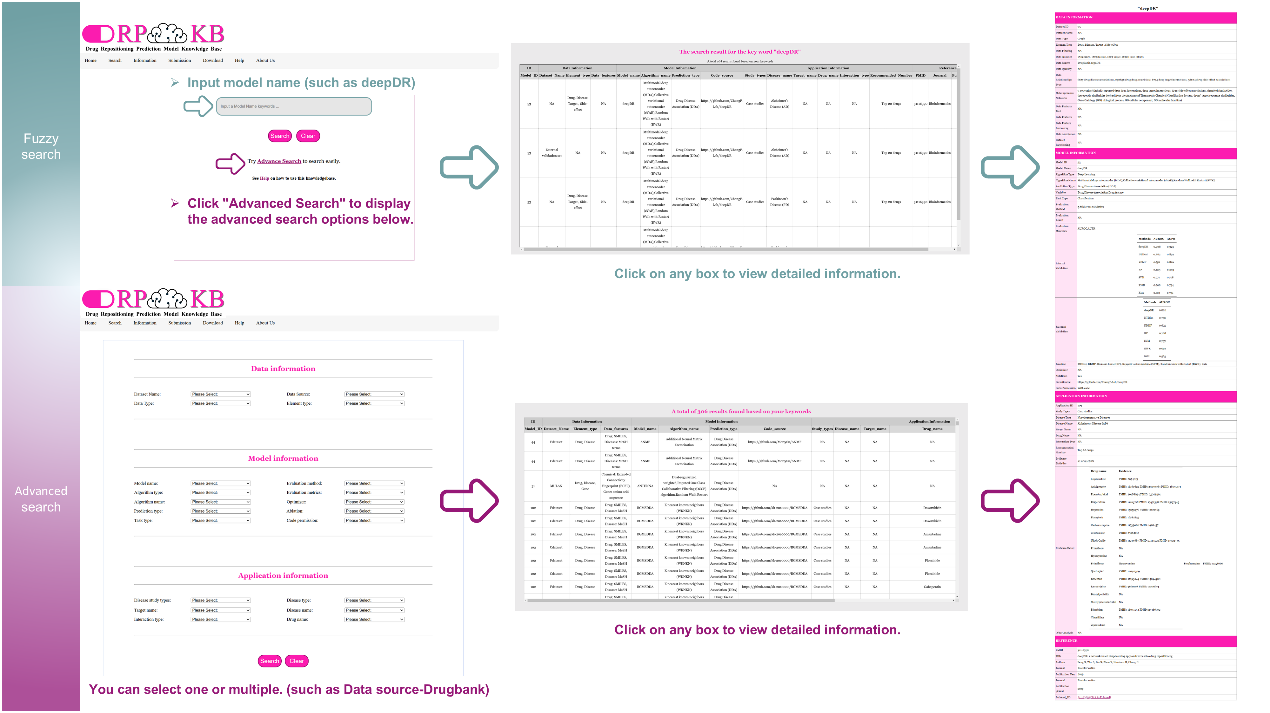

DRPMKB 1.0 - Presentation interface

- The Home page is the entry point. It displays the platform's overview, goals, and value. Below, icons for Data, Model, and Application allow one-click access to related information. On the right, links to the types of models included in DRPMKB 1.0 and commonly used DR resources are listed.

- The Information page is central to DRPMKB 1.0, offering a structured framework for data management. It presents the entity-relationship (ER) diagram of the knowledge base and its overall data structure. It also provides detailed tables listing data, model, application, and reference information, with a practical case example in the final column of each table to aid understanding. In addition, nearly 200 links to external DR-related databases are integrated, giving direct access to these resources.

- The Help page helps users work with DRPMKB 1.0. It offers concise guides on browsing, searching, and submitting data, includes a step-by-step introduction for new users, and outlines the biennial update policy.

- The About Us page describes the team's research background. Contact details, including the team's address, email, and website links, are also provided for collaboration or inquiries.

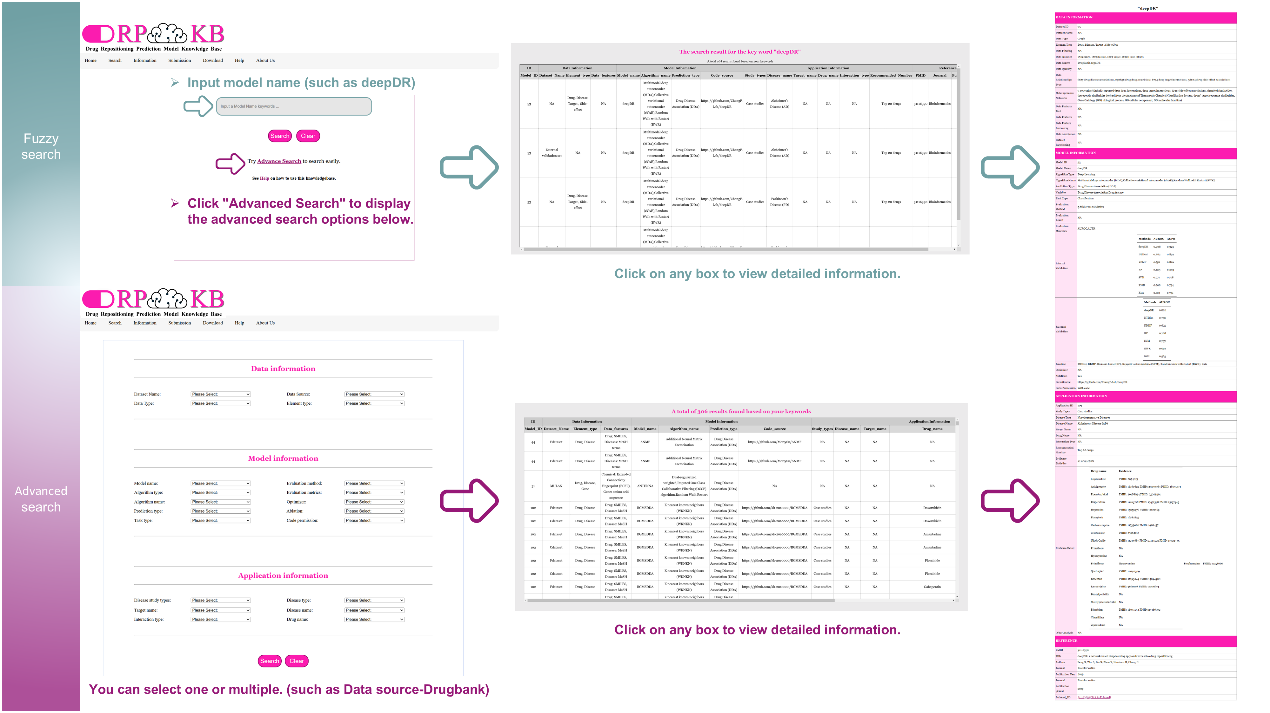

DRPMKB 1.0 - Interactive interface

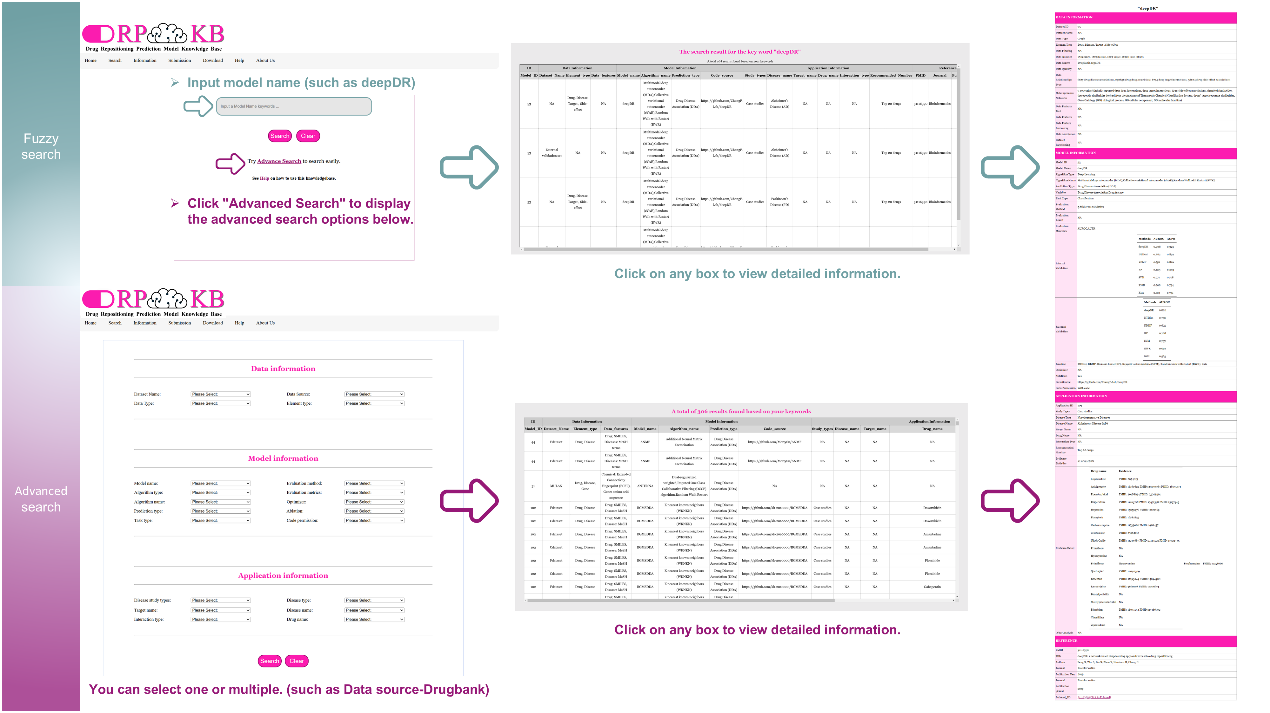

- The Search page is the platform's information retrieval tool and offers two search modes: fuzzy search and advanced search. Fuzzy search enables broad queries by entering keywords without strict constraints, while advanced search refines results by Data, Model, and Application. Advanced search supports both individual and combined queries, helping users narrow down their results.

- The Download page provides access to all data associated with DRPMKB 1.0. This section aims to promote data sharing and foster collaboration between researchers and professionals in the field.

- The Submission page simplifies the process of contributing to DRPMKB 1.0. Clear inclusion and exclusion criteria are provided to maintain data quality and consistency. Once submitted and reviewed, user-contributed research results are incorporated into the knowledge base, supporting its growth and ongoing data sharing.
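
The two search modes on the Search page can be illustrated with a minimal sketch. It is not the platform's implementation: the records are made up for illustration, the function names `fuzzy_search` and `advanced_search` are hypothetical, and two behaviours are assumed, namely that fuzzy search is a case-insensitive substring match across all fields and that combined advanced queries require every supplied field to match.

```python
from typing import Dict, List, Optional

Record = Dict[str, str]

# Made-up illustrative records; these are NOT actual DRPMKB 1.0 entries.
RECORDS: List[Record] = [
    {"data": "drug-target interactions",
     "model": "graph neural network",
     "application": "drug-disease prediction"},
    {"data": "gene expression profiles",
     "model": "random forest",
     "application": "drug-target prediction"},
    {"data": "drug-disease associations",
     "model": "matrix factorization",
     "application": "drug-disease prediction"},
]


def fuzzy_search(records: List[Record], keyword: str) -> List[Record]:
    """Assumed behaviour: keep records where any field contains the keyword."""
    needle = keyword.strip().lower()
    return [r for r in records
            if any(needle in value.lower() for value in r.values())]


def advanced_search(records: List[Record],
                    data: Optional[str] = None,
                    model: Optional[str] = None,
                    application: Optional[str] = None) -> List[Record]:
    """Assumed behaviour: every supplied field filter must match (logical AND)."""
    filters = {"data": data, "model": model, "application": application}
    # Ignore filters the user left empty.
    active = {field: term.strip().lower()
              for field, term in filters.items() if term}
    return [r for r in records
            if all(term in r[field].lower() for field, term in active.items())]


if __name__ == "__main__":
    # Broad keyword query across all fields.
    print(len(fuzzy_search(RECORDS, "drug-disease")))
    # Combined query restricted to specific fields.
    print(advanced_search(RECORDS, model="graph", application="drug-disease"))
```

In this sketch, a single-field call such as `advanced_search(RECORDS, model="forest")` corresponds to an individual query, while passing several fields corresponds to a combined query.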

DRPMKB 1.0 - Usage Guidelines

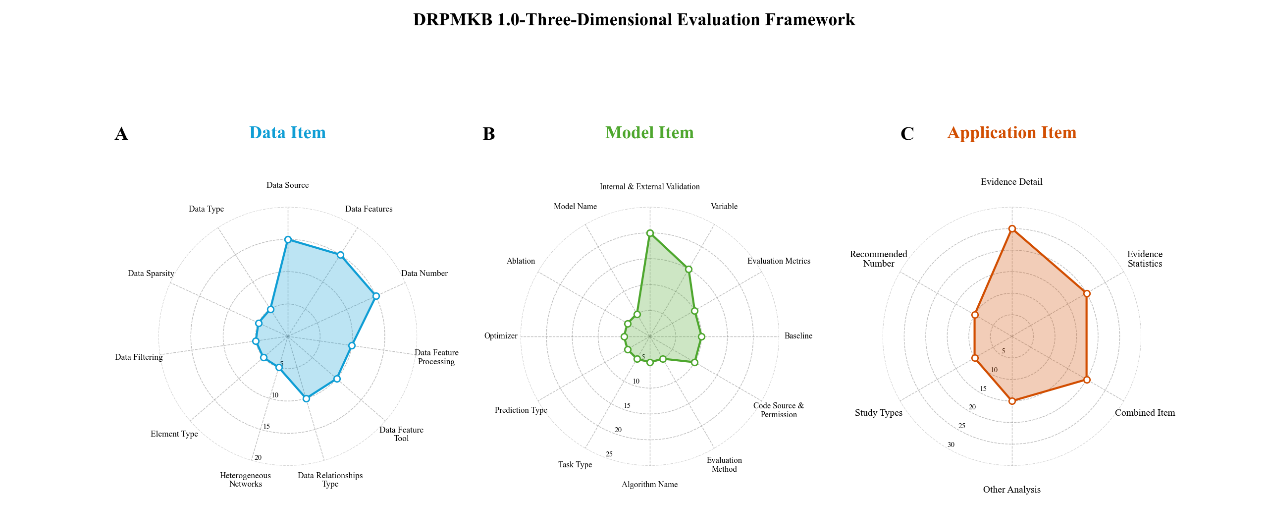

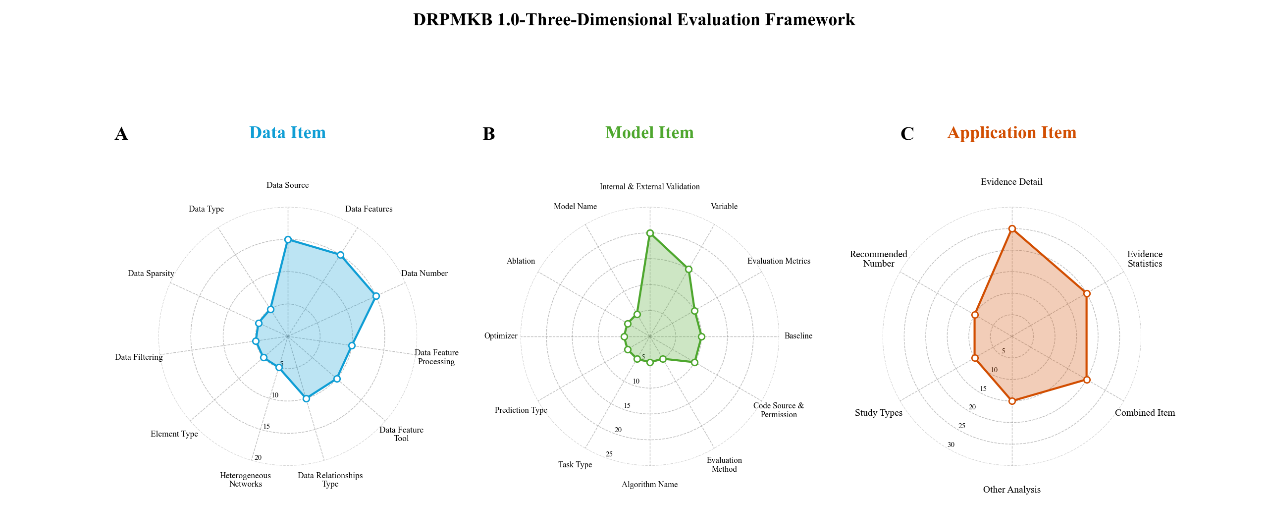

DRPMKB 1.0 - Three-Dimensional Evaluation Framework for DR Models

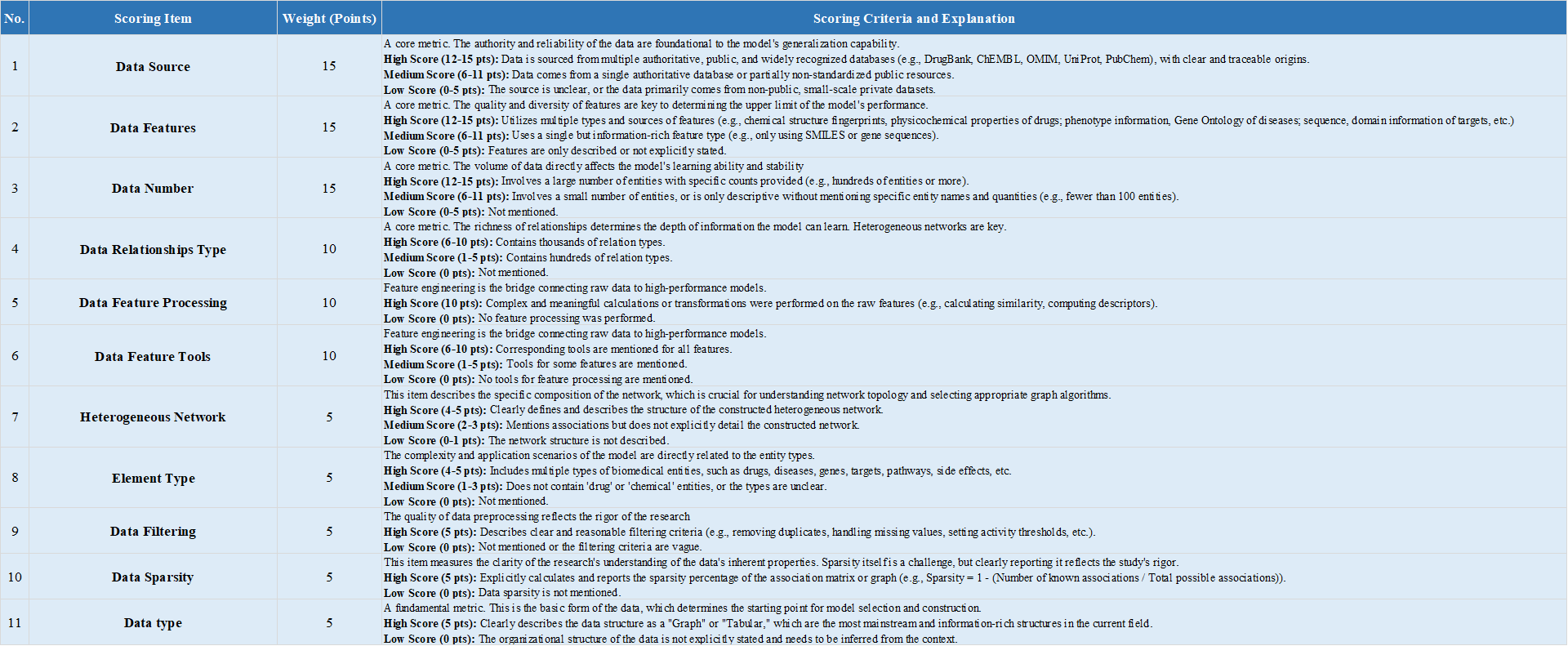

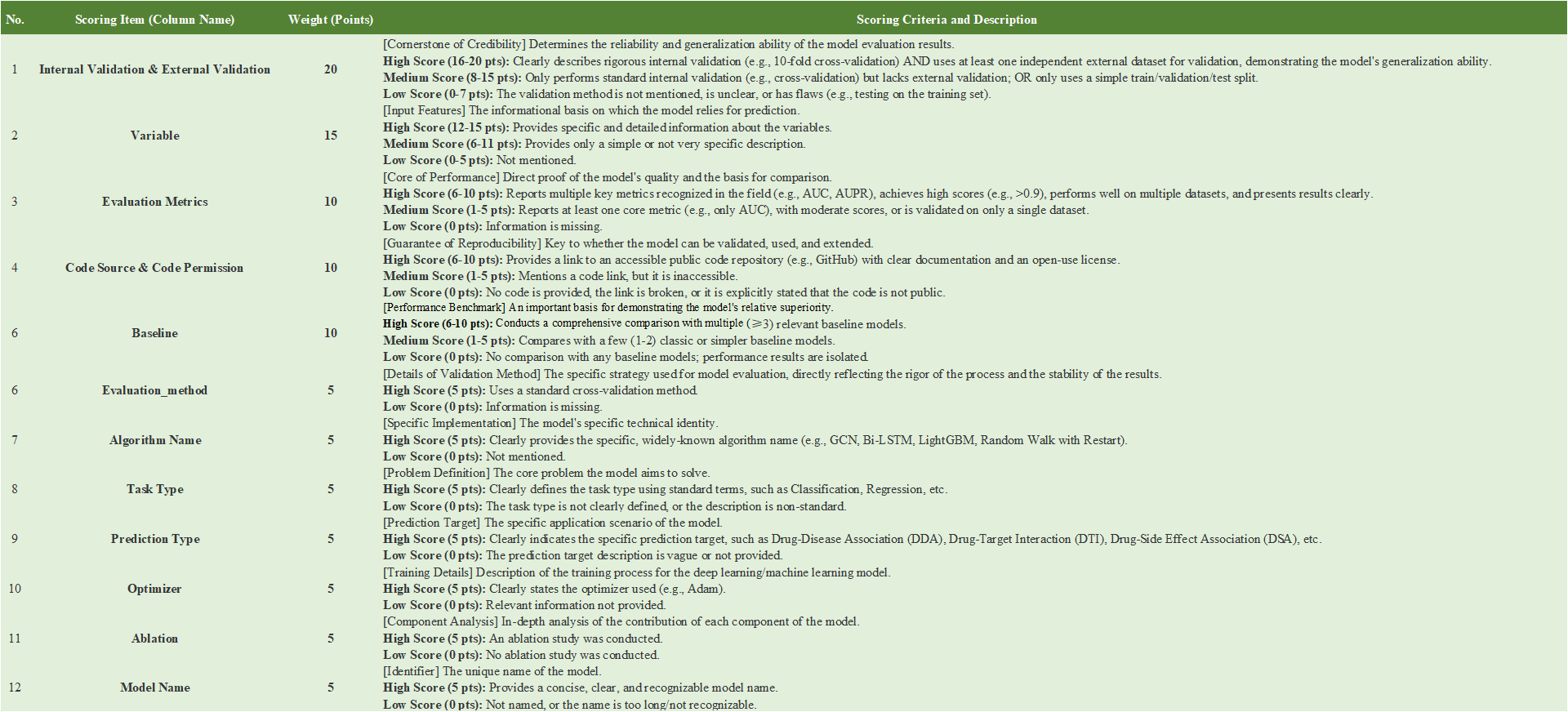

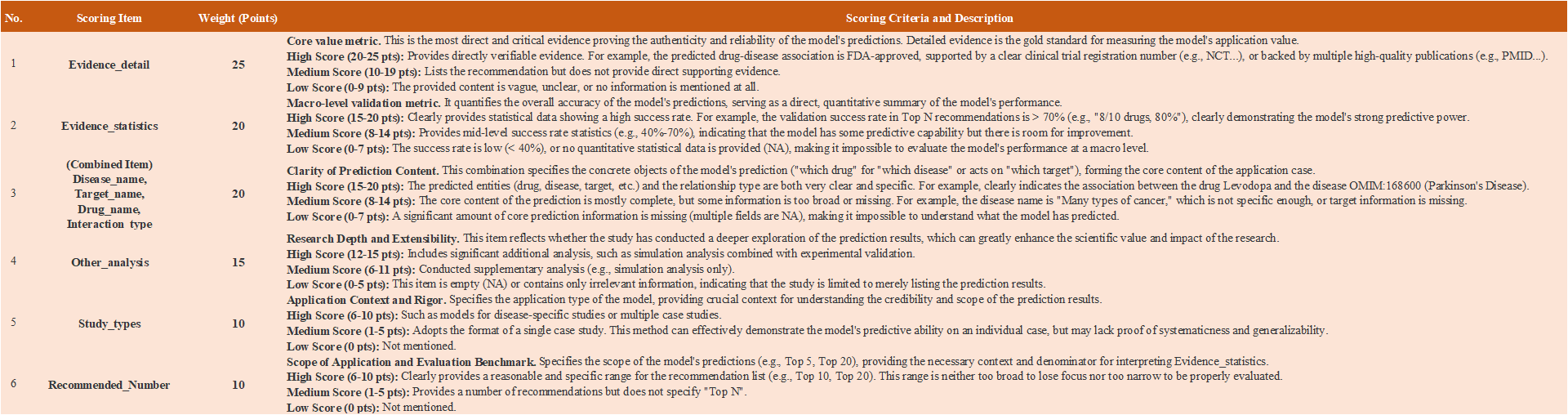

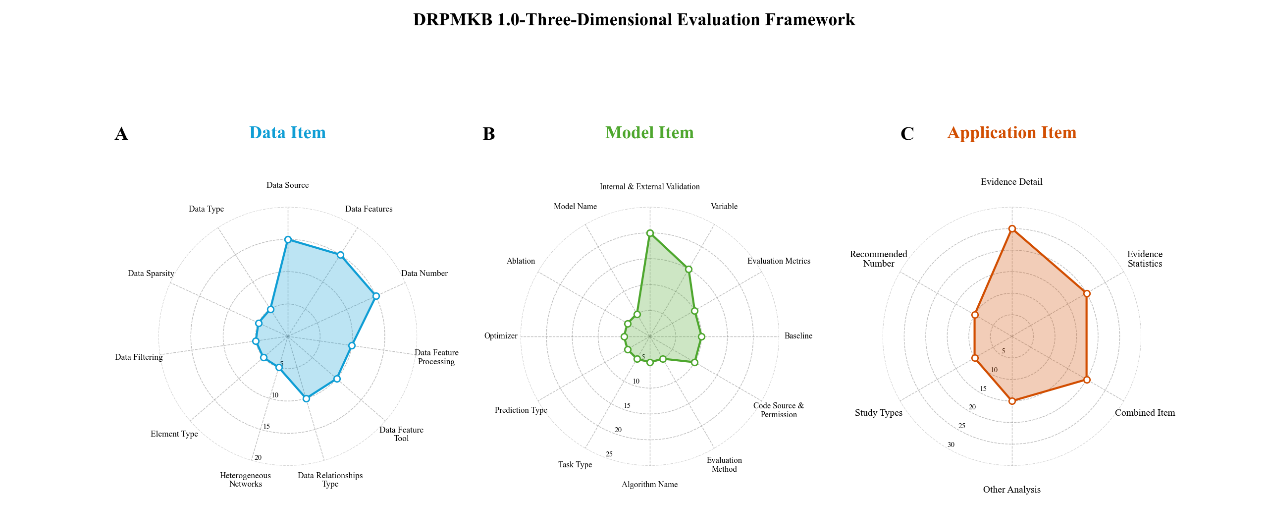

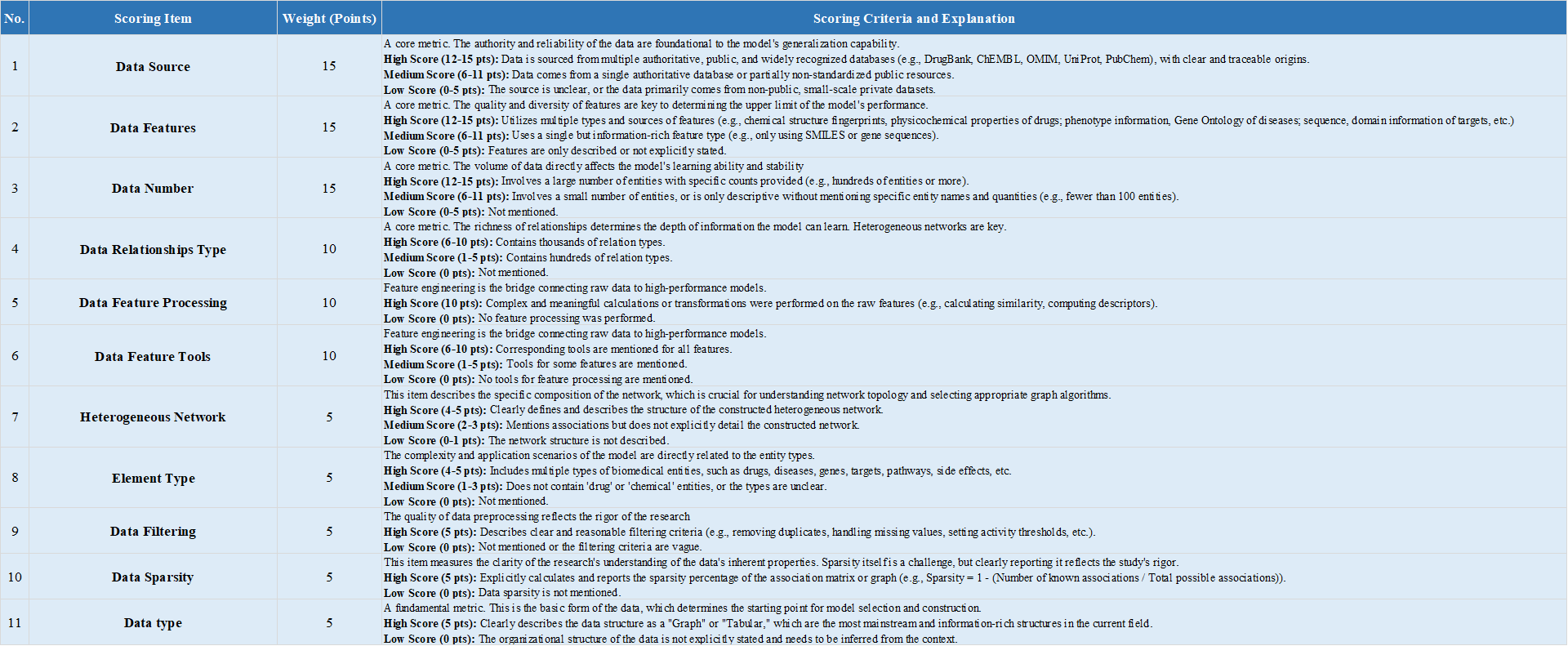

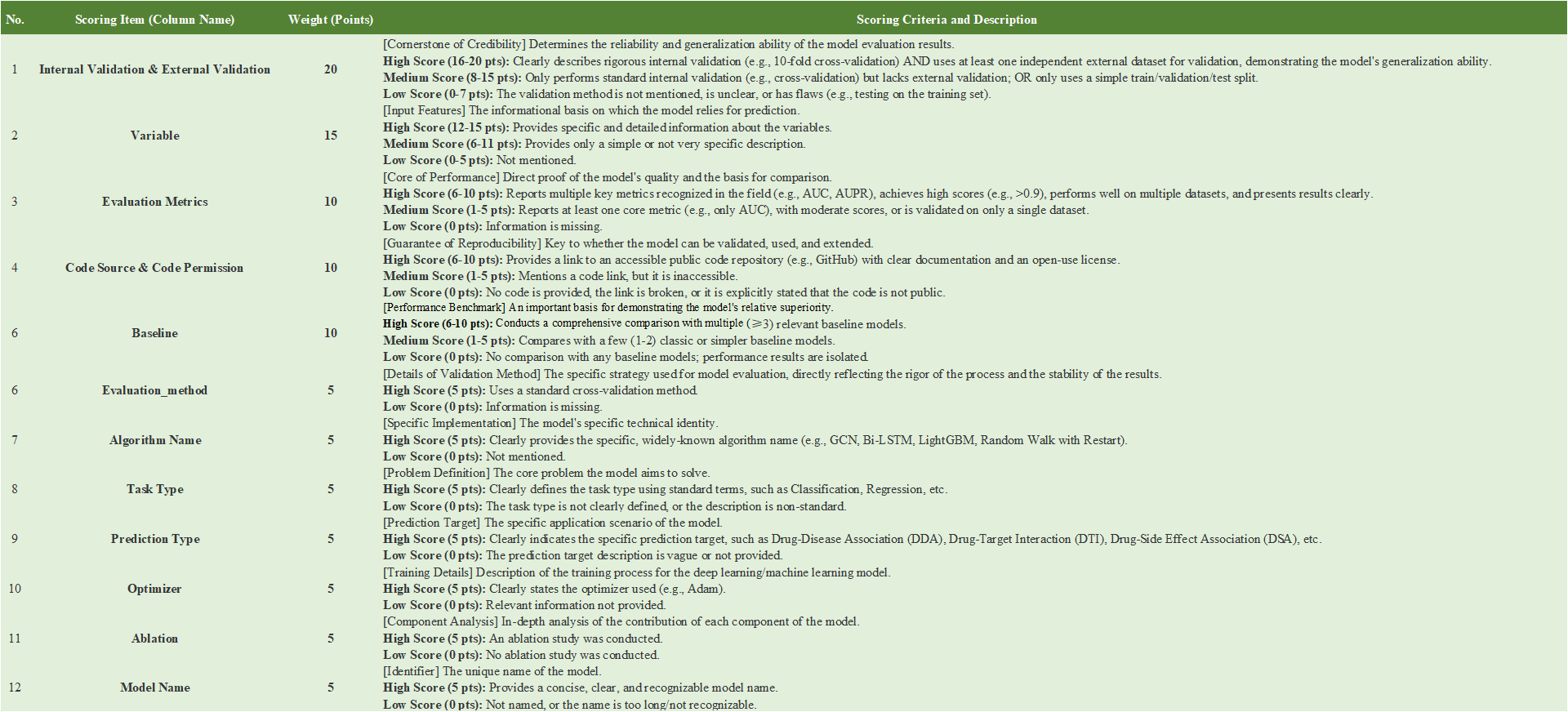

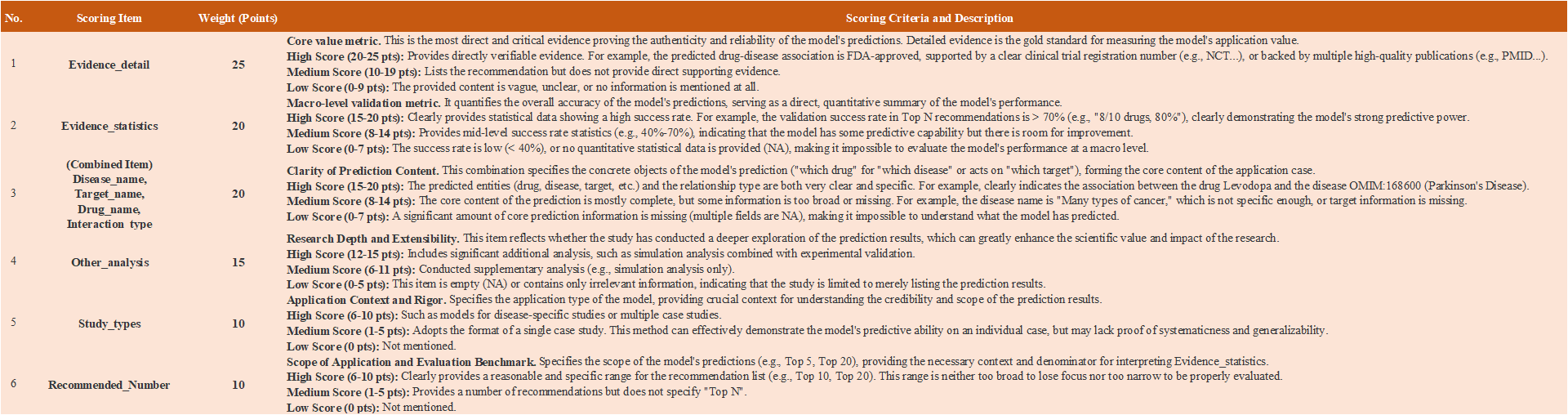

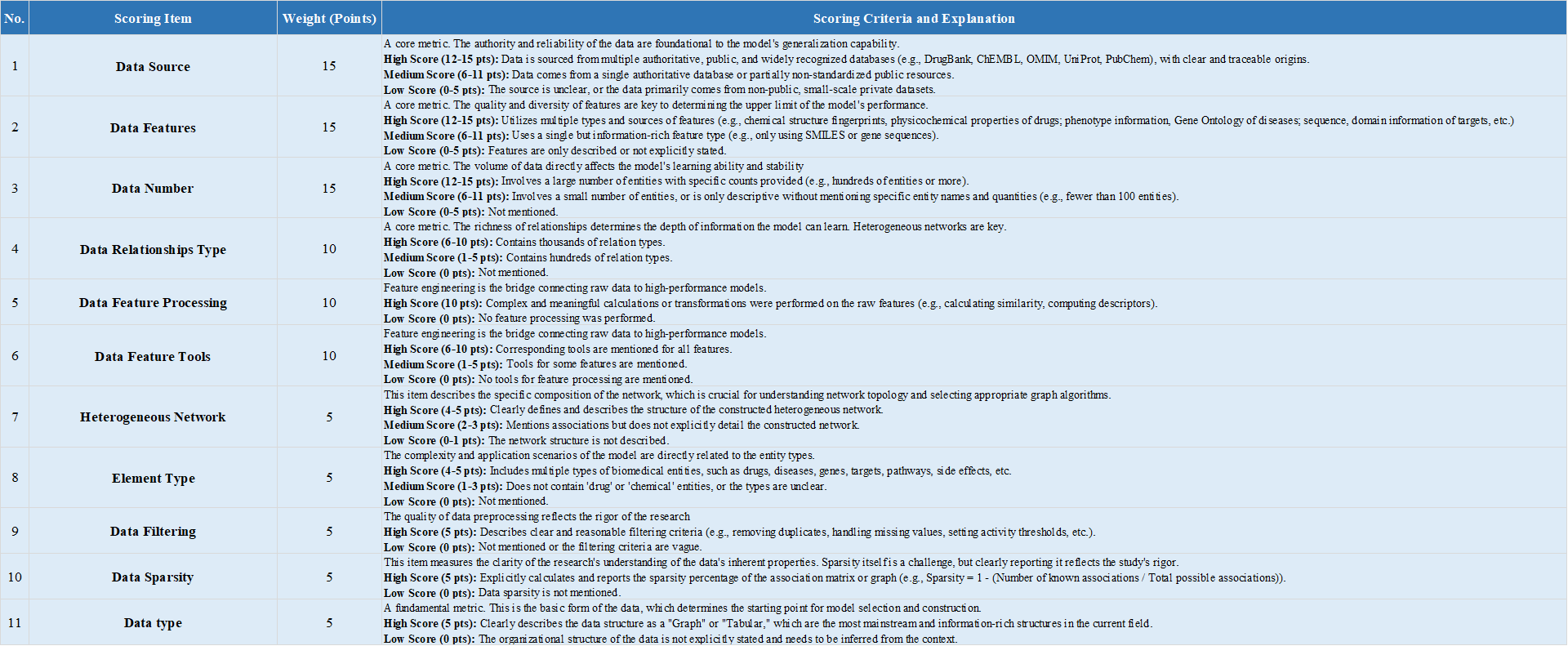

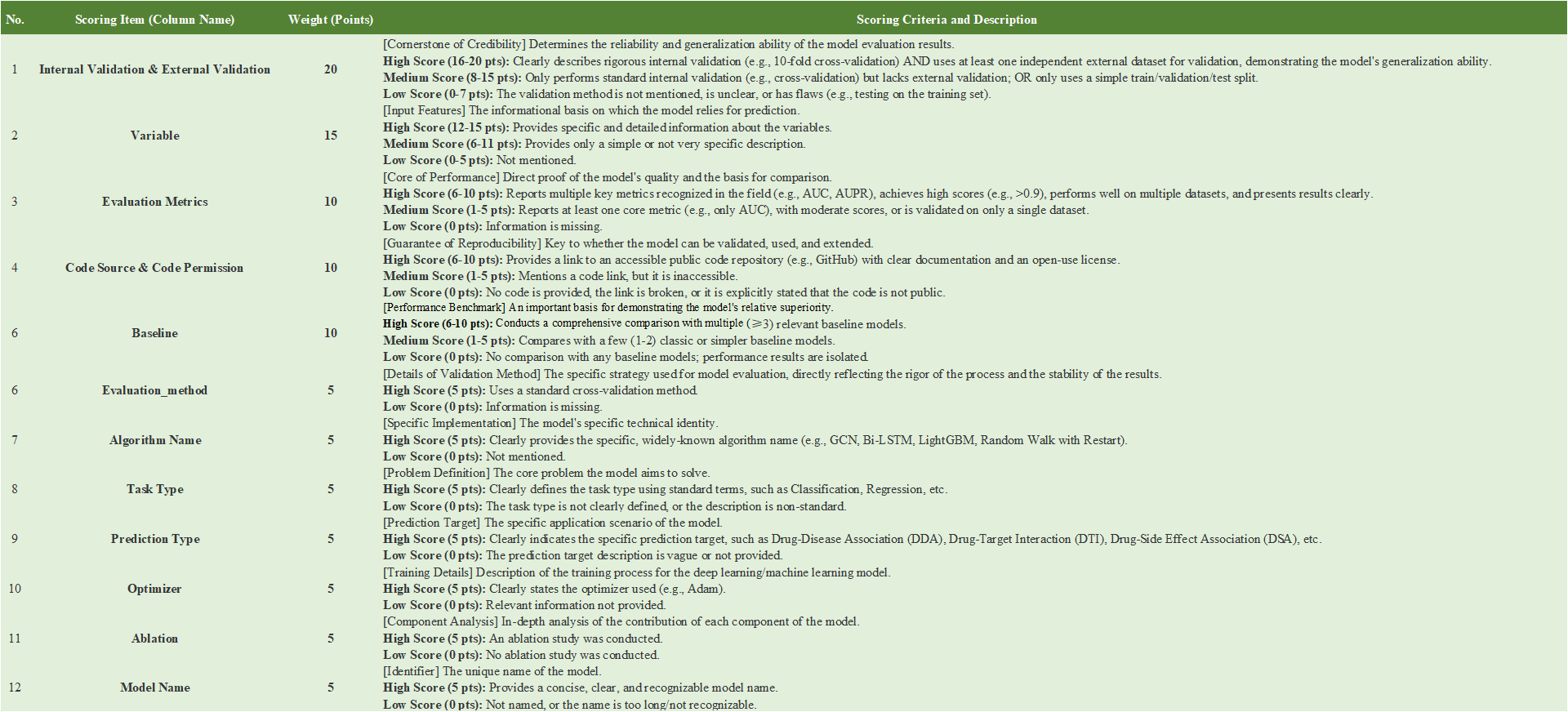

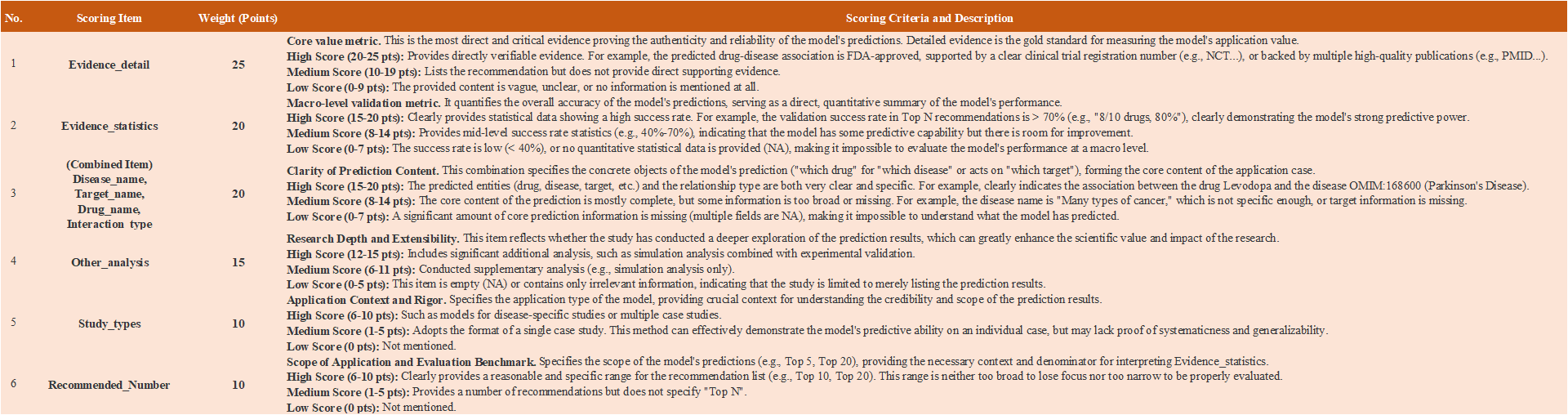

- To assess the 193 models included in DRPMKB 1.0, we built a three-dimensional evaluation framework covering data, model, and application. Each dimension is scored out of 100 points, with weights of 30% for data, 40% for model, and 30% for application. The total score reflects each model's performance in data quality, model design, and application value, offering an integrated measure of scientific rigor. Scoring details are given in the Appendix. The data dimension uses 11 indicators to assess the reliability and diversity of inputs: Data Source, Data Features, Data Number, Data Feature Processing, Data Feature Tool, Data Relationships Type, Heterogeneous Networks, Element Type, Data Filtering, Data Sparsity, and Data Type. The model dimension uses 12 indicators to assess the rigor, reproducibility, and robustness of model design: Internal & External Validation, Variable, Evaluation Metrics, Code Source & Permission, Baseline, Evaluation Method, Algorithm Name, Task Type, Prediction Type, Optimizer, Ablation, and Model Name. The application dimension uses 6 indicators to capture the biomedical relevance and practical utility of predictions: Evidence Detail, Evidence Statistics, a combined item (Disease Name, Target Name, Drug Name, Interaction Type), Other Analysis, Study Types, and Recommended Number.
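
As a rough illustration of how the three dimension scores might combine, the sketch below assumes the total is a plain weighted sum of the dimension scores (each out of 100) using the stated 30%/40%/30% weights. The names `DimensionScores` and `total_score` are hypothetical, the example values are invented, and the per-indicator scoring rules from the Appendix are not reproduced here.

```python
from dataclasses import dataclass

# Dimension weights as stated in the text: data 30%, model 40%, application 30%.
WEIGHTS = {"data": 0.30, "model": 0.40, "application": 0.30}


@dataclass(frozen=True)
class DimensionScores:
    """Per-dimension scores for one model, each on a 0-100 scale."""
    data: float
    model: float
    application: float


def total_score(scores: DimensionScores) -> float:
    """Weighted sum of the three dimension scores (assumed aggregation rule)."""
    for name in WEIGHTS:
        value = getattr(scores, name)
        if not 0 <= value <= 100:
            raise ValueError(f"{name} score must be between 0 and 100, got {value}")
    return sum(weight * getattr(scores, name) for name, weight in WEIGHTS.items())


if __name__ == "__main__":
    # Invented example values, not taken from DRPMKB 1.0.
    example = DimensionScores(data=80, model=70, application=90)
    print(round(total_score(example), 2))
```

Because the weights sum to 1, the total under this assumption also lies on a 0-100 scale, which keeps it directly comparable with the individual dimension scores.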

DRPMKB 1.0 - Evidence Assessment Framework for Model Predictions

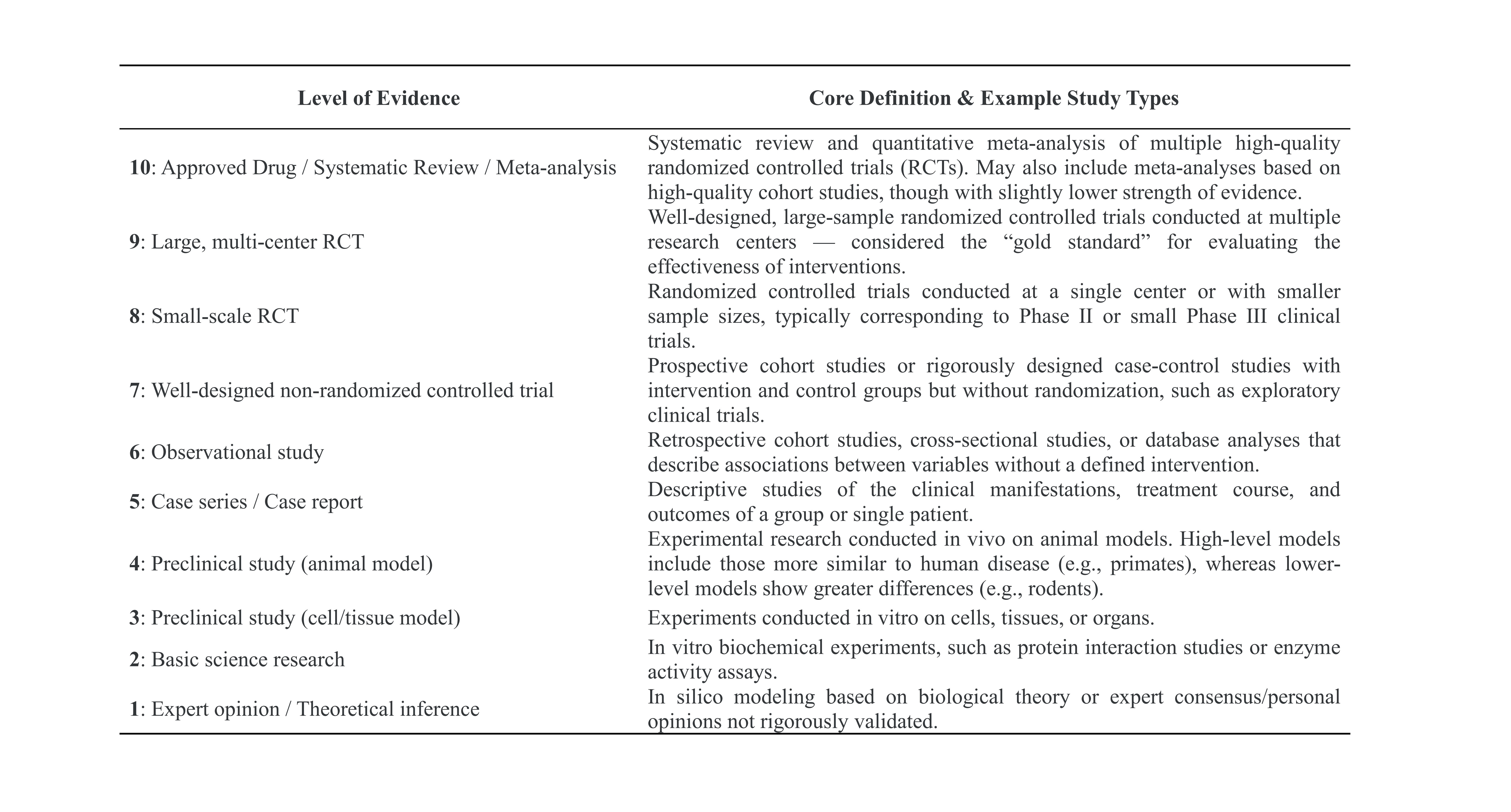

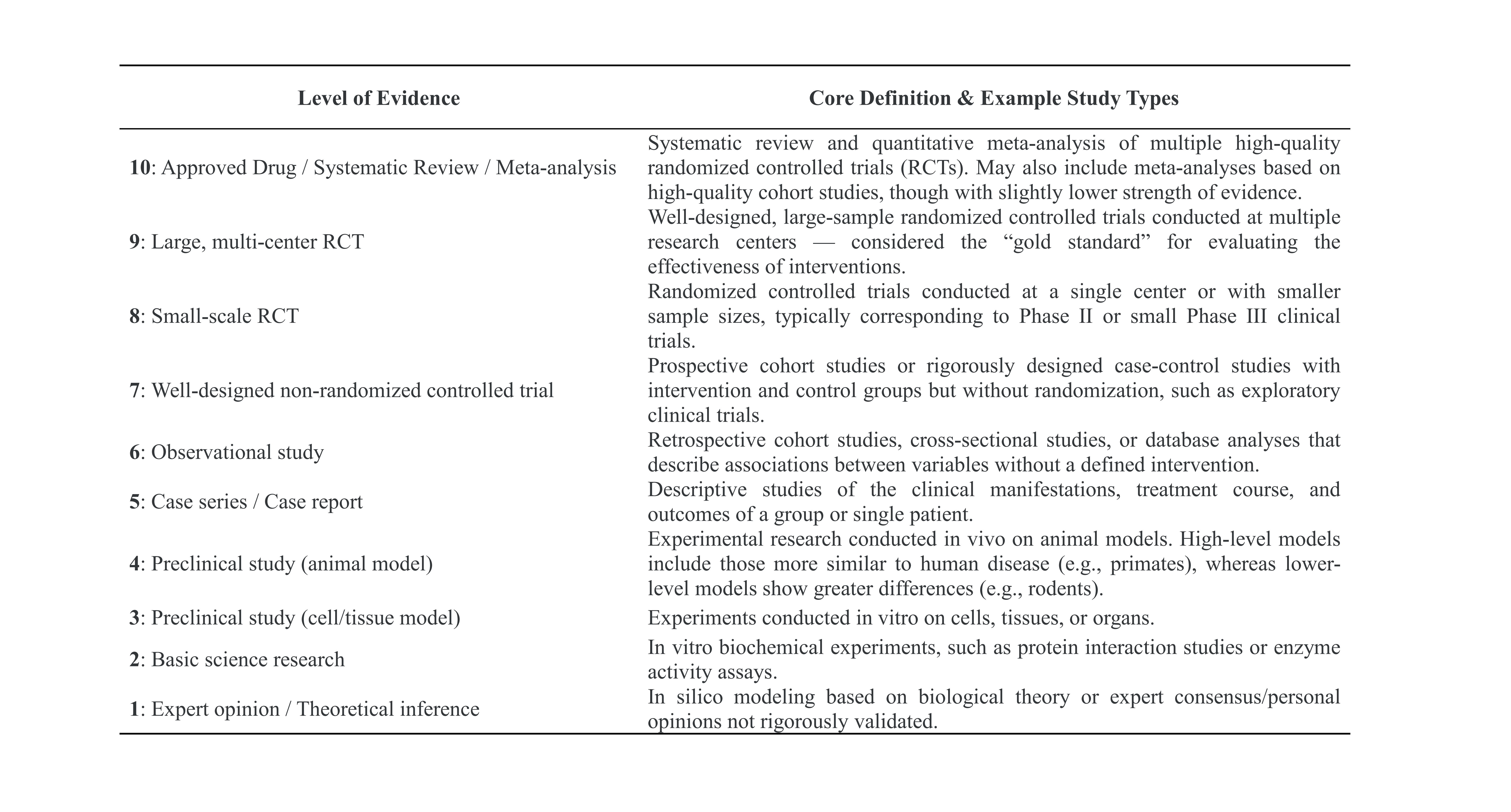

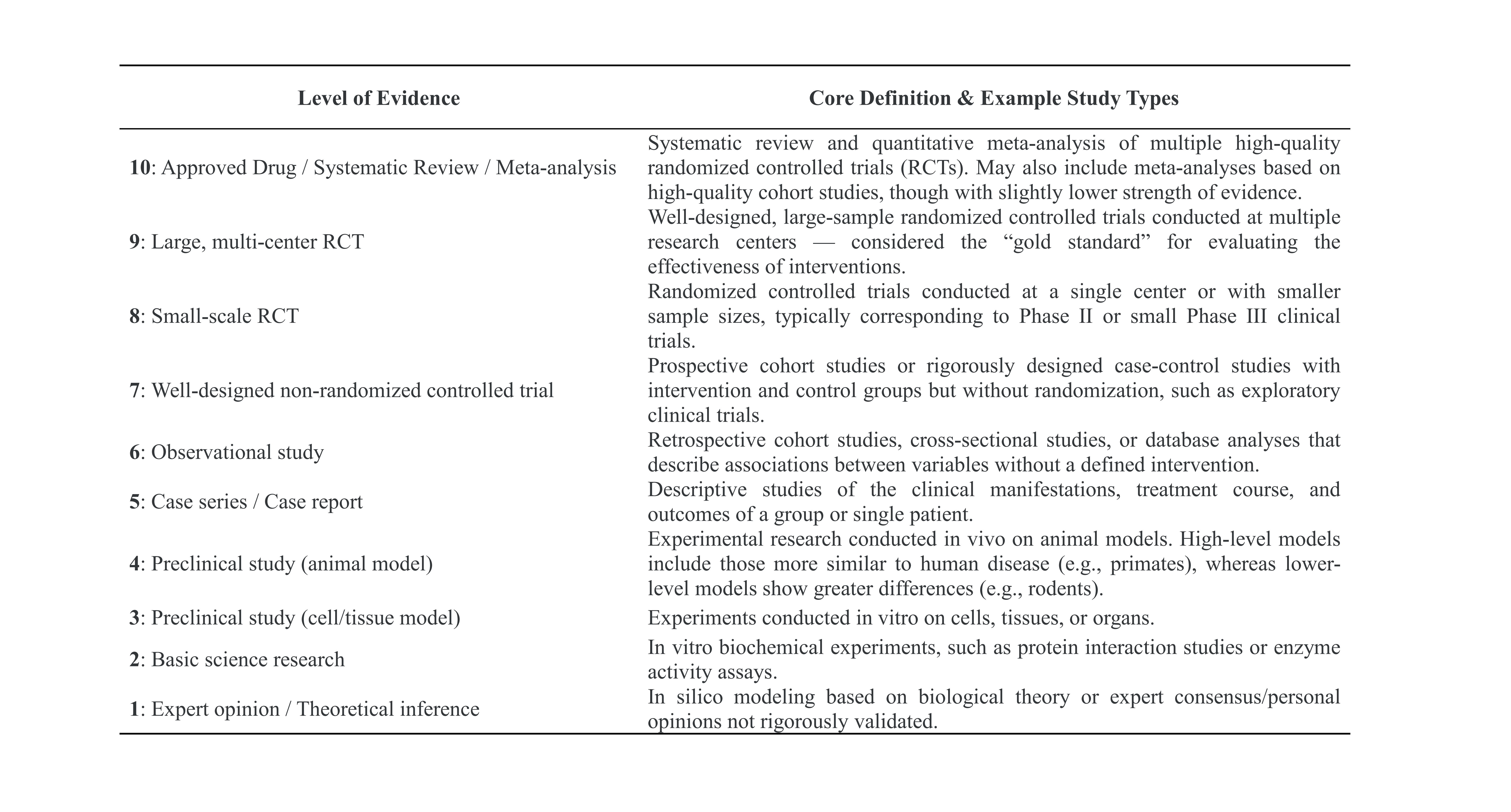

- Most DR models in DRPMKB 1.0 generate predictions for specific diseases, drugs, or targets, supported by evidence from three main sources: publications indexed in PubMed (PMIDs), annotated database entries, and accessible online resources. To evaluate the reliability of this evidence and its potential for experimental and clinical translation, we developed a Level of Evidence framework that assigns each source a quantitative score for scientific rigor and clinical relevance.
